Consumers Say Tech Companies Should Be Liable for Content on Their Platforms

Key Takeaways

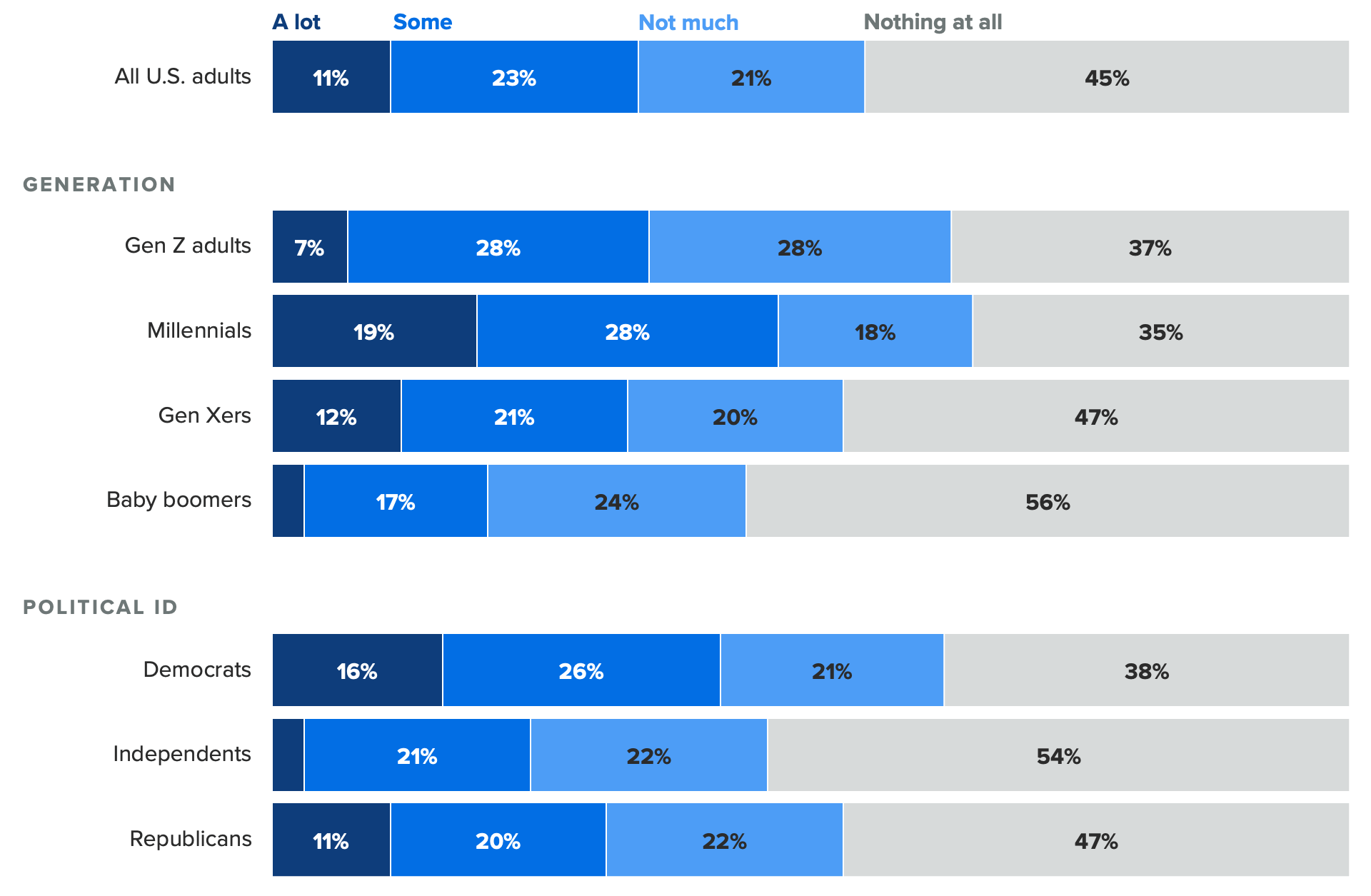

Only about one-third of consumers have heard about Section 230, and awareness of the legal provision is highest among millennials (47%) and Democrats (42%).

Two-thirds of consumers (67%) feel that tech companies should be legally liable for at least some content hosted on their online platforms, and support is even higher among those aware of Section 230 (74%).

Consumers largely see positive outcomes to companies being legally liable for content on their platforms, and find messages for reforming the legal provision convincing.


As the Supreme Court takes on whether tech companies can be held legally liable for content posted on their platforms (through Gonzalez v. Google LLC and Twitter, Inc. v. Taamneh), the future of the internet hangs in the balance. At the core of the issue is a specific legal provision called Section 230.

Section 230 isn’t yet part of the everyday lexicon, but it underpins the modern internet and will become a larger part of tech coverage this year as we draw closer to the court’s ruling.

What is Section 230 and why does it matter?

Section 230 is a legal provision of the Communications Decency Act of 1996, titled “Protection for ‘good Samaritan’ blocking and screening of offensive material.” In plain language, it means tech companies generally cannot be held liable for content that users post on their online platforms (though companies are still required to remove illegal content like fraud schemes or child abuse). The provision has come into focus in recent years because it shields technology companies, including those that operate social media sites, from liability for misinformation or propaganda spread on their platforms.

Section 230 says that “No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.” These are sometimes called “the 26 words that created the internet,” because without them, social media and online search would not exist as we know them: the burden of continuously monitoring all content on these platforms would be too onerous.

Consumers say tech companies should be legally liable for content on their platforms

Section 230 is admittedly an esoteric subject given the legalese involved, and awareness of the provision is not yet widespread. That makes current opinion an interesting benchmark for where people stand on the issue before tech coverage becomes saturated with messages for and against reform.

Recent Morning Consult survey results suggest that tech companies may face an uphill battle in the court of public opinion. A majority of U.S. adults (67%) say companies should be legally liable for some, if not all, content found on their platforms, and that share rises to 74% among those who have heard “some” or “a lot” about Section 230. Only 12% of U.S. adults say companies should remain completely immune to lawsuits related to content found on their platforms.

Previous Morning Consult data suggests that 2023 will be a difficult year for the tech sector from a regulatory perspective, particularly around issues of censorship and content moderation. Both Democratic and Republican voters are more likely than not to support additional regulation of major technology companies, but support skews higher on the left. Three-quarters of Democrats (76%) say companies should be legally liable for some or all content on their platforms, versus 63% of Republicans. As such, tech companies face a challenge from regulators and lawmakers on both sides of the aisle.

With broad public support, lawmakers and regulators will have an easier time enacting policy that reins in Big Tech’s power. Add the upcoming 2024 presidential election, in which misinformation and political ads on social media are bound to take center stage, and the question becomes less “Will Section 230 remain as it is?” and more “How will Section 230 change, and what will the consequences be?”

People see positive outcomes to reforming Section 230

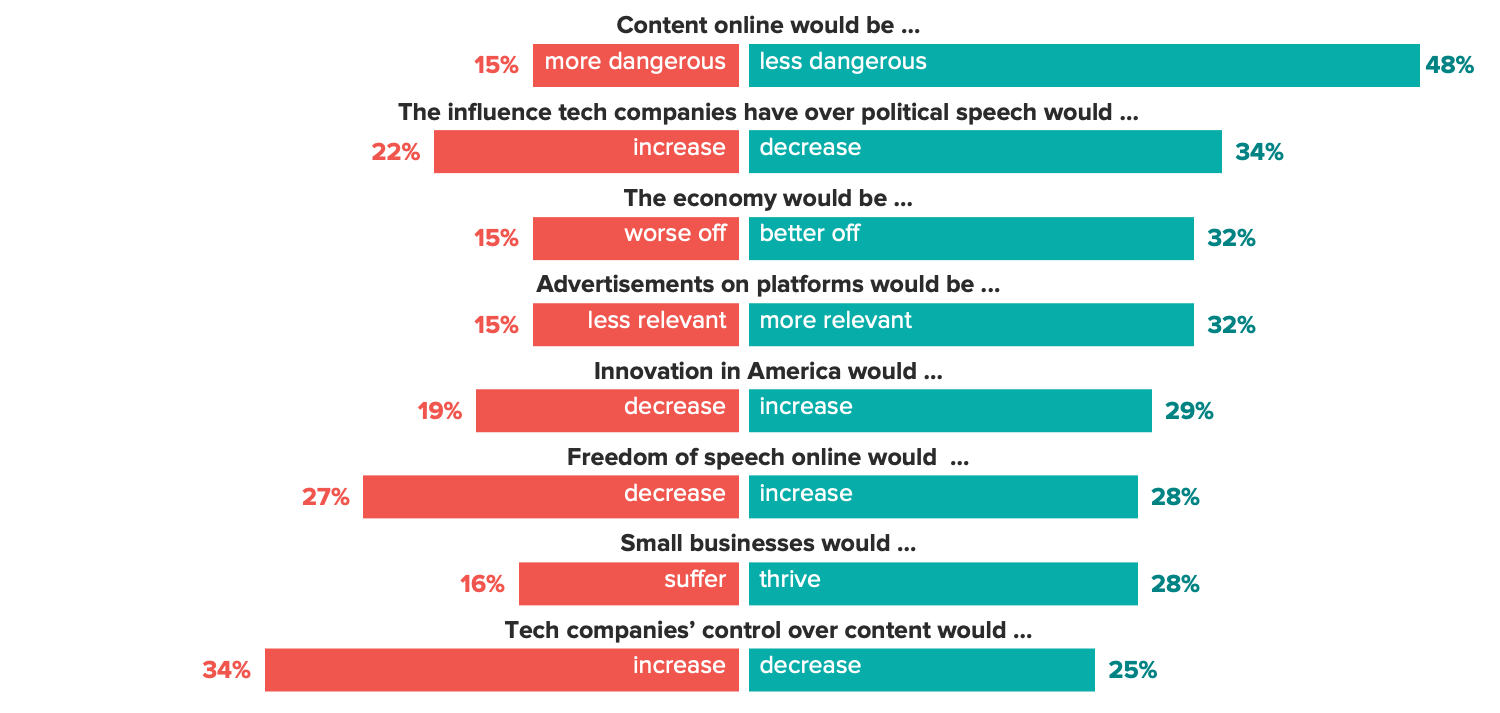

Consumers feel generally optimistic about what would happen if companies were legally liable for the content posted on their platforms. In this hypothetical scenario, nearly half of adults (48%) say there would be less dangerous content online, and a third each say that the economy would be better off and that advertisements would be more relevant to them.

This largely mirrors the broader sentiment that good things would happen if technology companies were more heavily regulated. We are now seeing that sentiment spill over into specific provisions like Section 230, underpinning a growing desire to curb tech’s influence.

Naturally, these opinions could change as the narratives around Section 230 heat up.

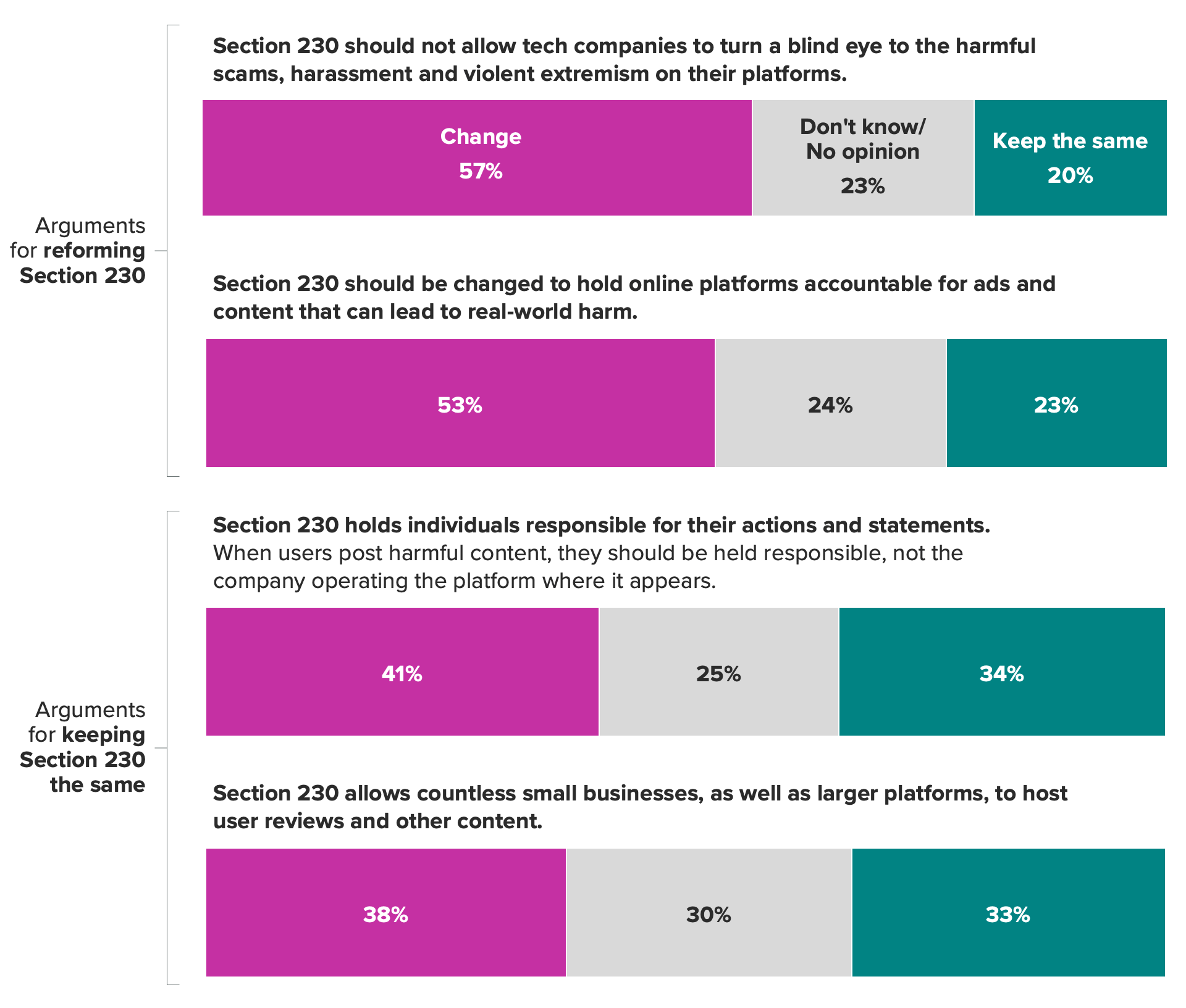

Proponents of keeping Section 230 as it is argue that individuals should be held accountable for their own speech. Those who support reforming Section 230 argue that online platforms are publishers, like news sites, and that if the content shared on them results in real-world harm, the platforms themselves should be accountable.

If the Supreme Court leaves Section 230 as it is, the status quo will largely continue.

But if Section 230 is reformed, things will get tricky. At one extreme, some argue that policing all content would be so onerous that tech companies would no longer find it worthwhile to keep running their businesses. At the other end of the spectrum, some argue that companies can innovate their way out by building algorithms or artificial intelligence capable of determining which content is permissible before it is even posted, allowing business to continue as usual.

As with most things, the reality is likely somewhere in the middle. Section 230 reform would likely shift liability to companies with online platforms for some forms of content and not others. AI models would be leveraged to help with content moderation, but they would need to improve drastically to meet the moment. Tech companies could also require users to create accounts tied to their real-world identities and use new user agreements to shift responsibility and liability for posted content onto those users. Companies would become much more conservative about the content allowed on their platforms, including advertisements, and would exercise much more control.

Until the court rules, each side will need to battle it out.

Message testing finds that arguments in favor of reform are persuasive with consumers

For regulators and industry leaders looking to gauge the potential reception of their messaging as media coverage and ad campaigns heat up, Morning Consult research shows that messaging in favor of Section 230 reform resonates more with consumers than messaging against it. The messages tested are naturally not exhaustive of every argument for and against reform, but the language lawmakers use to advocate for reforming Section 230 (inspired by quotes from members of Congress who support the so-called SAFE TECH Act) tends to be more persuasive than that of those lobbying to keep it as is (inspired by Electronic Frontier Foundation talking points).

The appetite for tech regulation is strong among Democrats and Republicans alike. Across every branch of government, both parties have expressed some desire to reform Section 230, albeit without consensus on exactly what that might look like. Given the political landscape, and with public opinion trending toward more accountability for the tech industry, the likeliest outcome is some form of compromise on liability.

With so much hanging in the balance, it will be important for both policymakers and those who elect them to be adequately informed about what reforming Section 230 might mean, and what trade-offs consumers are willing to accept.

Jordan Marlatt previously worked at Morning Consult as a lead tech analyst.