Companies Developing Artificial Intelligence Are Scoring Early Wins With Consumers

This story is part of Morning Consult’s ongoing coverage of generative artificial intelligence, aimed at creating a foundational understanding of consumer attitudes on the emerging technology.


Key Takeaways

Awareness and interest in a wide swath of AI applications have increased in just the last month, including double-digit jumps for AI-generated menu recommendations at restaurants (+13 points to 53% interested) and AI-powered therapy and life coaching (+10 points to 40%).

Consumers are putting the onus of ethical AI development on the companies and leaders that create the programs, and are less likely to attribute responsibility to the government or users of AI.

Trust in generative AI outputs is up from a month ago, but concerns over misinformation and bias in results persist.

Artificial intelligence is already piquing more Americans’ interest, according to Morning Consult trend data.

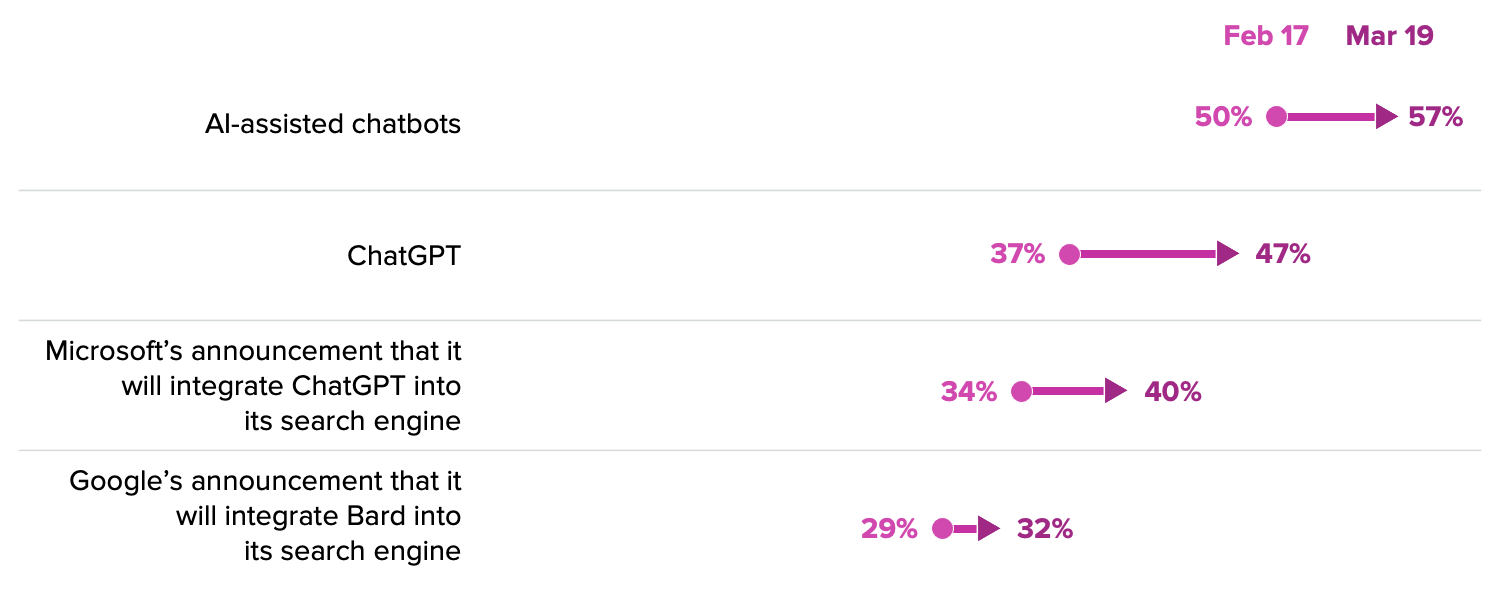

Part of the curiosity bump in AI-supported or AI-generated applications is simply due to increased awareness of the emerging technology. Headlines about the fight for AI dominance between Microsoft and Google have continued: Google last week opened up access to Bard, its AI chatbot competitor to ChatGPT, and Microsoft announced that it would integrate the AI-powered image generator DALL-E into its Bing search engine.

According to a recent Morning Consult survey, 57% of consumers said they have heard of AI chatbots in the news, up from 50% just a month ago, and 47% reported hearing about ChatGPT in the news specifically, a double-digit jump during the same time period. In the battle for clout, Microsoft has the advantage, but new AI tools will need to be relevant and helpful to consumers — as well as be developed responsibly — in order to be successful.

Interest in AI applications is up across the board

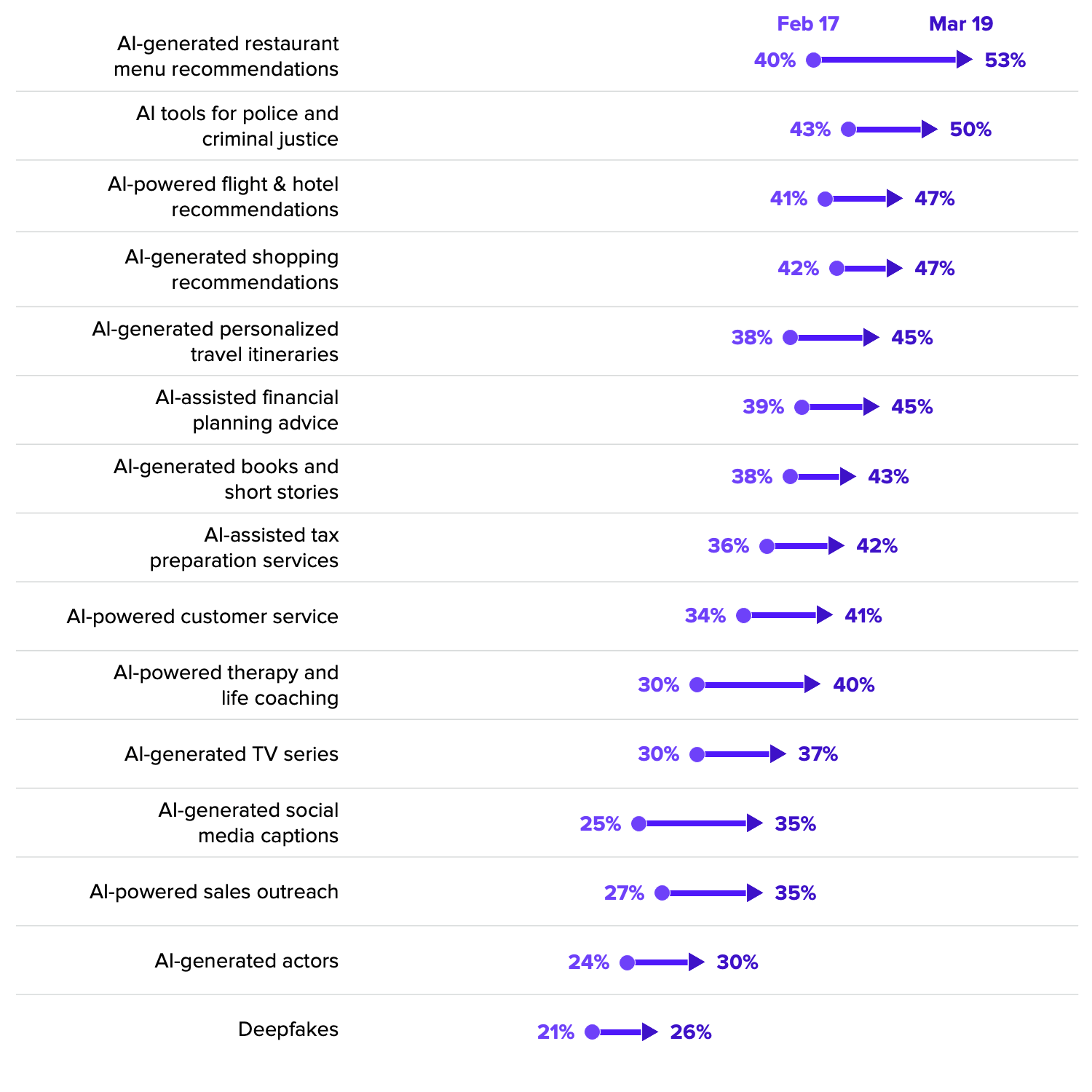

With heightened awareness of AI tech among consumers, interest in dozens of different AI applications is also up, from AI-powered flight and hotel recommendations to AI-generated financial planning.

Average interest across the applications included in the survey is up 4 percentage points since February. The categories seeing the most growth are: AI-generated menu recommendations at restaurants (up 13 points to 53%), AI-powered therapy and life coaching (up 10 points to 40%), and AI-generated social media captions (up 10 points to 35%).

That growing interest in AI applications is not confined to one or a few categories shows broad curiosity about the technology and how it might change many facets of everyday life.

As companies adopt AI models and integrate them into their existing products and services, they would be wise to thread the needle carefully: favor applications that automate help, which have proved popular among consumers, without entering the “uncanny valley” by substituting for human connection.

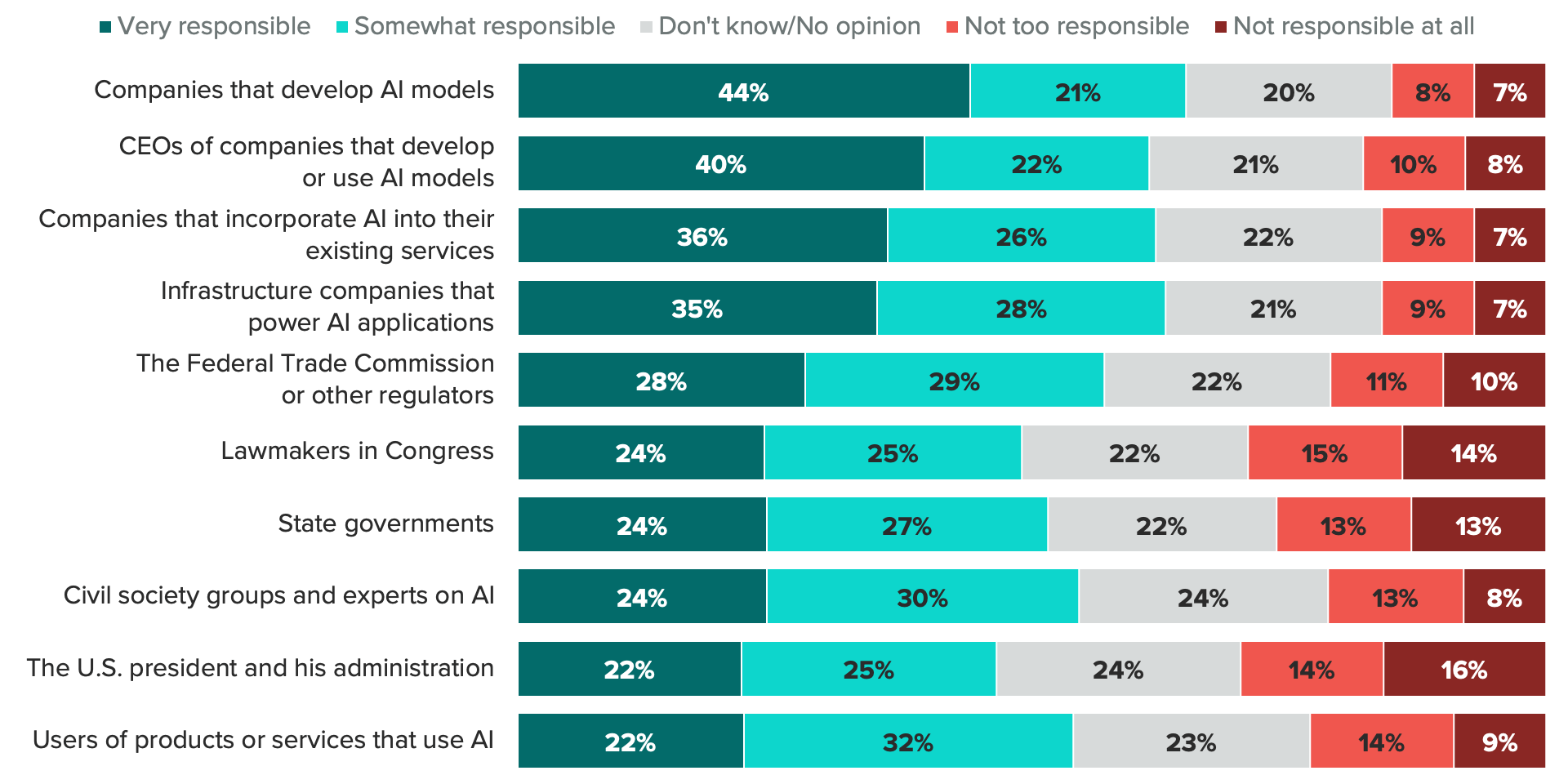

Consumers say companies that are developing AI should shoulder responsibility for ethical development

Behind all the excitement and daydreams of how AI might help us is the shadow of the harm it might also cause. These very real concerns, as reported in previous Morning Consult analysis, are seemingly understood by the biggest players in the AI space.

Microsoft has published governing principles for AI development (though it reportedly laid off its AI ethics team earlier this month) and Google noted in its announcement of expanded access to Bard that the model has the potential to provide misinformation. Even the CEO of OpenAI, the company that develops ChatGPT, has expressed concerns over the direction of the technology.

Consumers, for their part, expect the companies developing new AI models to shoulder responsibility for their ethical development. Nearly 2 in 3 (65%) consumers said companies that develop AI models bear at least some responsibility for doing so ethically. Infrastructure companies, companies that use but don’t develop AI and the CEOs of AI developers are also at the top of the list. The private sector is more likely than the government and regulators to be seen as responsible for ethical AI development, a finding that comes just after the Federal Trade Commission warned companies to keep their “AI claims in check.”

As AI interest grows, so does trust

Even as interest in and awareness of AI tech increase over time, concerns over misinformation and bias in AI search results persist. Two in 3 U.S. adults said they are concerned about the accuracy of AI search engine results, and 69% are concerned about potential misinformation in results from search engines that use AI.

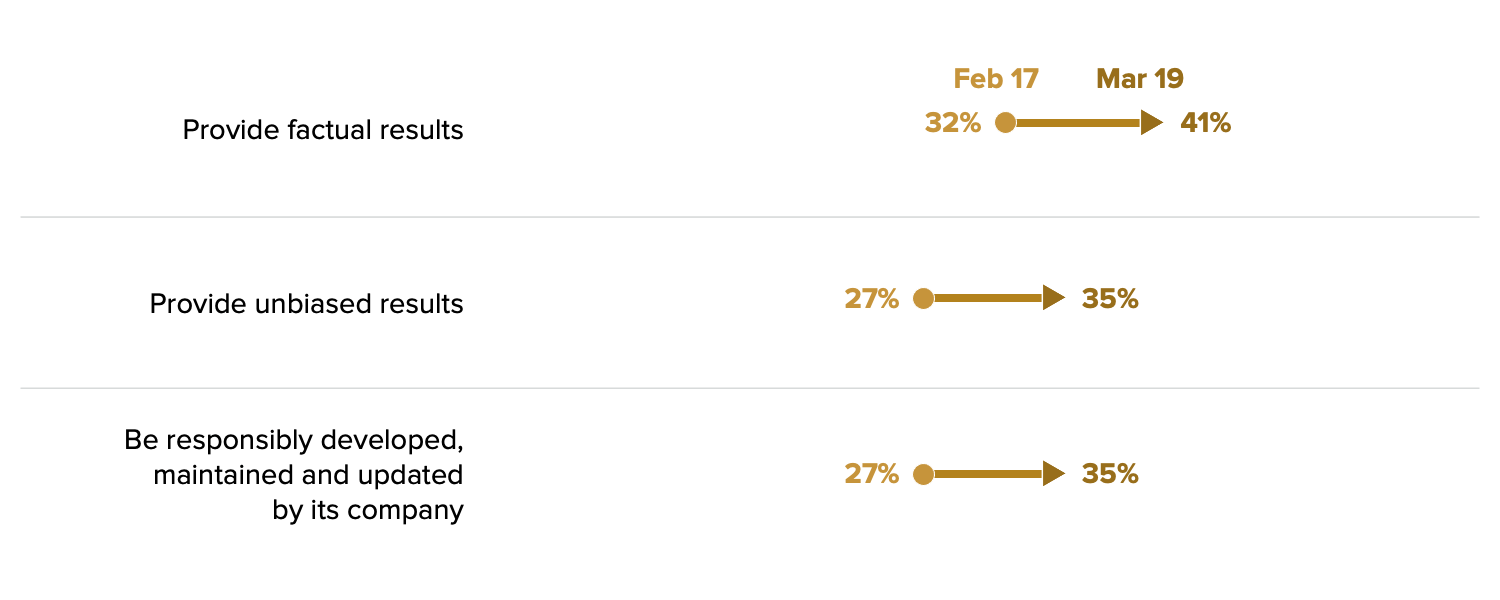

That being said, as people become more familiar with, and even accustomed to, the idea of AI in search, the share who trust it is increasing, even over a relatively short period of time. More than a third (35%) of consumers completely or mostly trust AI search to provide unbiased results, up from 27% a month ago, and trust in companies to develop AI responsibly is also up 8 points.

AI is on the right path to consumer adoption — for now

Unlike with Web3 or the metaverse, consumers are showing increased interest and trust in AI as an emerging technology. Companies at the forefront of developing these AI models are trying to achieve a difficult balance between maintaining momentum in rolling out new products or applications and not moving so quickly that they risk weakening consumer confidence with a faulty chatbot. Developing AI responsibly will be critical to building that trust, and so far, consumers are warming up to the idea of AI as a presence in their everyday lives. But it’s still early days, and we’ve yet to see everything that AI has to offer — both the good and the bad.

Jordan Marlatt previously worked at Morning Consult as a lead tech analyst.